“These guys are all accelerationists,” Daniel Keller said. I pretended to know what the word meant, hurriedly Googling as he waxed on forebodingly about people like Peter Thiel. This was nine years ago. At the time I was working at Vogue, and Keller—then known primarily as an artist—had already begun drifting toward the stranger edges of internet culture and technology. Over the years he’s moved further in that direction, becoming a venture investor at the intersection of AI, robotics, and crypto.

Recently I called Keller again, hoping for a cleanly optimistic case around AI and art. The internet is currently drowning in AI slop: from fashion campaigns to political propaganda, an endless churn of machine-generated content that seems designed mainly to satisfy the algorithms that distribute it. And Keller is known for, among other things, having coined the term “sloptimism.”

Surely, I thought, he’s someone who can explain why this moment might still produce something worthwhile.

Keller does make that argument—sort of. In his telling, “slop” may simply be the crude early stage of every new medium. Early film began by awkwardly imitating theater before it discovered the grammar of cinema. AI, he suggests, may be going through something similar. But Keller is no techno-utopian.

Jacob Mendel Brown: Let’s start with Sloptimism. What is it and where did it come from?

Daniel Keller: I’ve been a fan of portmanteaus as a way of predicting the future for a while. Part of my art practice when I was still making art was making portmanteaus and neologisms. They were part of this series called FUBU career captchas—portmanteaus of potential future jobs that were 3D printed as captchas to be theoretically unreadable by robots so they could be futureproof jobs. I was making those in like 2012 or 2013, way before this was a big part of the discourse.

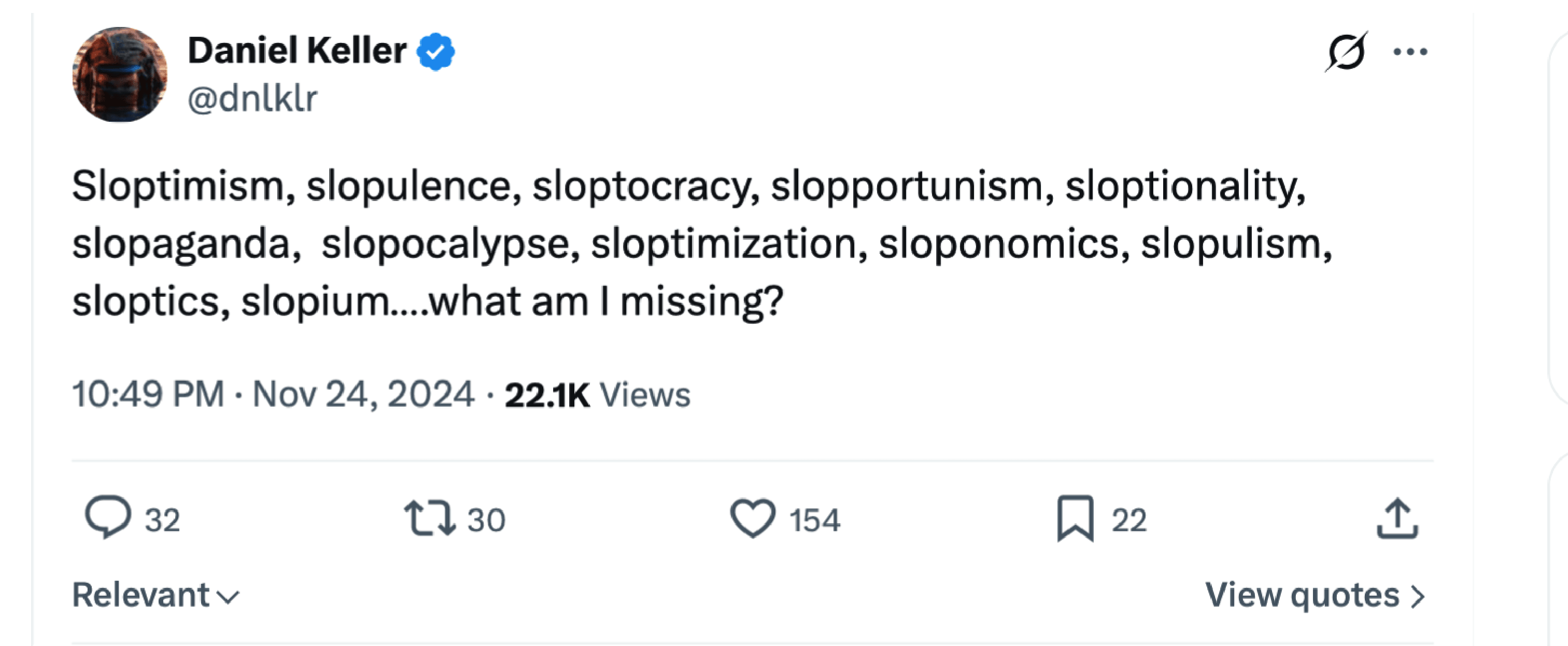

When I saw the word “slop” circulating I recognized how evocative it was. It felt like a good prefix or suffix. So I made a tweet with a huge list of slop portmanteaus. Sloptimism was the first one. I was thinking of it as a bit—just marking my territory. A test of whether you’ve coined something now is if you ask an AI who coined it — will I show up?

JMB: Before we get into the case for optimism or pessimism, how do you define slop?

DK: There are lots of definitions. It’s an abused term now — it means everything and nothing. But I had a tweet: content that appears in your feed solely because of interlocking attention-economy incentives.

So, it’s an algorithmic precipitate. Something that’s more a product of social media economics than generative AI itself. You don’t have slop without a feed. Slop is the precipitate of the algorithmic feed.

JMB: One of the interesting parts of your argument is that slop might actually be the beginning of something.

DK: I believe in scaling laws. When people call AI video slop in this superficial sense, like Oh, it looks unrealistic—that’s not a strong criticism because it won’t stay that way. Most cultural developments since the Renaissance are downstream of technological developments.

Perspective. Oil paint. Photography. Those technologies created new mediums. And there’s a pattern where a new medium appears and people react negatively. They think it’s slop. Then eventually a few people figure out how to make real art with it. And eventually it becomes codified as a developed form. So it kind of starts with slop and ends with opera.


JMB: As you say that, it reminds me of early cinema, where people were basically filming stage plays.

DK: Exactly. There’s a skeuomorphic period you have to get through. That’s natural.

JMB: If AI art is currently in that early “filming stage plays” phase, what might the real medium eventually look like?

DK: There’s a new class of models called World Models. A lot of video-generation companies discovered about a year and a half ago that video is extremely useful training data for robots. If you can simulate lots of video, you can simulate lots of training data. Some of these models aren’t just outputting video; they’re generating video in real time.

So a World Model is a simulation where physics and causality are built into the model. Right now they’re still kind of dreamy interactive videos. But they’re getting close to being able to simulate things at the level of, say, Minecraft.

It’s early, but it’s going to turn into something like the Metaverse people talked about a few years ago, just realized on an individual level.

Something closer to lucid dreaming. Holodeck-level immersive simulations. I’m genuinely both terrified and excited about that.

JMB: Are these tools or does the artist slowly wither away?

DK: Even with video and audio generation and all this stuff, in the right hands they’re still just tools. The fear of artists becoming obsolete isn’t really founded yet.

I was playing with Suno, an AI music generator, earlier today. It’s awesome. The space between listening to music and creating music is becoming really interesting. It’s hard to dismiss that entirely as slop.

JMB: What about deepfakes?

DK: I expected deepfakes to become a problem years earlier than they did. So at first it was like, Oh, I guess this didn’t end up being a problem, people can parse them.

But recently something shifted. The technology matured kind of around the latest wave of Sora releases, like, in August. Especially when they started really optimizing for videos that look like reels.

I saw a video of a supposed school principal with a baseball bat yelling at ICE agents outside a school. It looked like New York. If you looked closely you could still see artifacts. But people were sharing it instantly.

These weren’t old people; it was people my age. People seem increasingly unable to parse truth and fiction. That’s hard to be optimistic about.

JMB: Scared to ask, but how do you see society at large being affected by AI?

DK: There’s an inevitability of us transitioning into a post-labor-bottlenecked economy. There might still be jobs, but labor won’t be the main bottleneck anymore.

The bottleneck becomes energy and minerals again. It becomes more like a metabolic process without humans playing the linchpin role—an automated system where energy, data, and materials flow through without human labor as the constraint.

JMB: So what will humans do? I mean we have to do something.

DK: There’s a window where people can increase their productivity dramatically. Basically leveraging AI. It’s a kind of centaur phase. Half man, half machine. So if you want to, resist or avoid it. Okay. But you’re gonna have worse outcomes, probably, than people who at least understand it better.

JMB: But eventually you’re saying traditional jobs disappear?

DK: I’m not sure there will be space to find a job as we know it. But I do believe there will continue to be status games. Or increasingly other things—that might seem absurd—to spend your time on. I mean the idea of being a podcaster would have seemed absurd to our grandparents. I’m sure there will be things like that.

The agent-to-agent robo economy is going to so utterly dwarf our current economy that they could probably just give us Fully Automated Luxury Communism as a PR expense.

JMB: You introduced me to accelerationism years ago. How does that shape how you see this moment?

DK: I consider myself a UAC, an unconditional accelerationist. Acceleration is a self-propelling process that isn’t really steered by any individual’s desire or will. It’s almost a geological process. My optimism is basically that we don’t have a choice. Most of these things are baked in.

JMB: In that first conversation you were tipping me off to right-leaning accelerationists, the guys now in power. Are they winning? How scary is this all?

DK: I would say right accelerationists are as mistaken as left accelerationists. It’s the same mistake of thinking this is a process that’s responsive to human will. I’m not a doomer in the sense that I think by default this ends badly for us. I don’t think that superintelligences are going to be some evil alien mind that thinks of us like insects.

Basically resistance is futile. But we’ll have a better chance of better outcomes if we have open minds and engage with technology and use it, regardless of what about us it makes obsolete.

JMB: If the process is inevitable, what role do people still have?

DK: You participate in the process. The minds that are getting created are literally trained on everything people publish. So publish. Even if no human ever reads it, you might end up being part of some kind of infinite history that way. And that could be very high leverage.